Know Risk.

Know Reward.

Detect unlawful communication in real time and at scale.

Reduce risk. Act with confidence.

One Platform.

Two Core Defenses.

Whether you need always-on monitoring or fast, on-demand review, HarmCheck deploys nearly 50 proprietary classifiers to find what matters, when it matters.

Active Listening™

Always-on communication surveillance.

Communication moves fast. Mistakes follow. HarmCheck Active Listening monitors messages in real time, flagging harmful or unlawful language before it’s sent so teams can fix it before it becomes a liability.

- Email add-in for Outlook

- Flags unlawful language before you hit send

- Nearly 50 categories of harm detected

- Dashboard with real-time alerts

- Restricted List detection

Rapid Deploy™

Crisis response & eDiscovery.

HarmCheck Rapid Deploy analyzes massive datasets in hours, not weeks—saving thousands of review hours and delivering clarity when you need it most.

- Built to handle large datasets

- Processes 20,000+ pages per hour

- Dashboard view of annotated original documents

- Built for fraud, audits, and pre-deposition discovery

- Delivers defensible, court-ready findings — fast

When the Stakes Are Highest

Three products, one platform — matched to whatever moment you’re in.

What if Citi had used HarmCheck before the Lindsey lawsuit?

A 2023 federal complaint alleges years of threats, sexual harassment, and retaliation inside the Equities division. Every category named in the filing is exactly what Active Listening was built to catch: at the moment of drafting, before the message is sent.

Threats intercepted. Patterns surfaced. Exposure prevented.

Read case study

Fair housing case: 246,000 documents reviewed in six days.

In a fair housing suit against a city council accused of blocking a housing development, discovery produced three years of communications. Rapid Deploy processed the corpus at 20,000+ pages per hour, surfacing coded NIMBY language and pretext patterns with sentence-level citations.

Pattern documented. Citations sourced. Depositions ready.

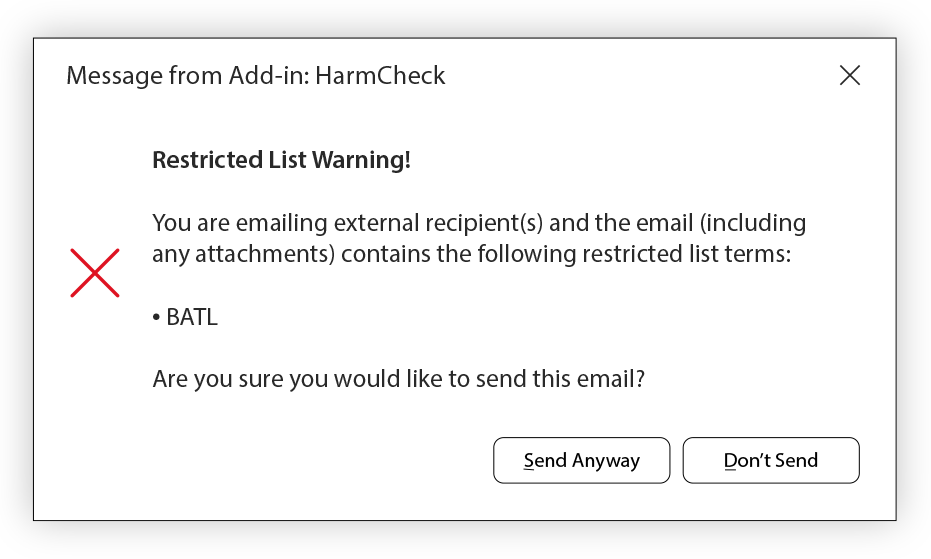

Read case study

Bulge-bracket bank stops an MNPI leak before send.

An equity research analyst began drafting a market commentary that referenced a name on the firm’s restricted list. HarmCheck’s Restricted List add-in intercepted the message, blocked the send, and routed it for compliance review before any external party saw it.

Zero MNPI breach. Zero regulatory disclosure. Zero headline.

Read case study

Restricted List Monitoring

Prevent insider trading and MNPI breaches before they happen. HarmCheck’s Restricted List add-in checks all internal communications against your firm’s restricted securities list in real time, automatically flagging any reference to restricted tickers, issuers, or related entities the moment it appears in email.

A Complete View of Your Communication Risk

Communication Compliance Dashboard

Whether you use Active Listening, Rapid Deploy, or both, HarmCheck gives you a complete view.

Active Listening shows real-time communication health, giving compliance managers instant visibility into risk as it emerges. Rapid Deploy brings clarity to large-scale datasets as they’re processed.

Dashboard preview: Processed 240K | Flagged 42 | Throughput 170/min | Trend Analysis | Recent Flags

~50 Proprietary Classifiers

Purpose-built and continuously trained to catch nuance, slang, and covert language that keyword blockers and general-purpose tools miss entirely.

- Insider Trading / MNPI: Monitors communications for material non-public information (MNPI) that could enable insider trading.

- Document Tampering: Identifies signs of altered or manipulated documents that compromise integrity.

- Sexual Harassment: Flags inappropriate or harassing language to help maintain a safe workplace.

- Covert Communication: Catches attempts to move conversations to unmonitored or unauthorized channels.

- Fair Lending: Flags discriminatory language in lending communications to help ensure compliance with the Equal Credit Opportunity Act (ECOA).

- Workplace Discrimination: Surfaces biased or unlawful remarks tied to protected classes in workplace communications.

- Restricted List: Prevents mentions of restricted securities, entities, or individuals in communications.

- Threats / Intimidation: Detects threatening or coercive statements before they escalate into legal risk.

- + many more: Nearly 50 domain-trained classifiers across compliance, conduct, and litigation risk.

Why HarmCheck

Keyword blockers catch the obvious. General-purpose tools weren’t trained for this. HarmCheck was built for one purpose: detecting harm in organizational communications with forensic precision.

Read more about why HarmCheck

Not keyword filters

Keywords miss slang, coded language, and implied threats. HarmCheck’s classifiers understand context, intent, and nuance across nearly 50 categories — catching what a keyword list never could.

Not a generic tool

General models aren’t trained on regulatory harm, employment law violations, or MNPI patterns. HarmCheck’s classifiers are purpose-built and continuously refined on the specific language of organizational risk.

Not a human review team

Manual review is slow, expensive, and inconsistent. HarmCheck processes 20,000+ pages per hour with 91%+ accuracy, delivering in hours what would take a review team weeks to complete.

What industry leaders are saying

Trusted by compliance leaders, legal experts, and enterprise executives.

"HarmCheck is the 'always-on' solution to the risk of problematic communications. This technology has broad application to use cases across all industries."

David Lang

Former Head of Global Compliance, RBC

"HarmCheck matters because careless or intended messages can cause irreparable harm — legally, internally, reputationally, and to a company’s morale and leadership. Catching it before it goes out brings profound value to both enterprises and individuals involved."

Philip Brittan

CEO, CTO & VP, Google

"Unfortunately, discriminatory communication still occurs and is a nightmare for any firm to deal with. HarmCheck provides an effective and modern solution to this compliance problem."

James Wylie

Expert Compliance Lawyer, formerly HUD & CFPB

"If anyone can bring a breakthrough communication technology to life, it’s the Alphy team. I’ve seen HarmCheck highlight harmful, unethical, and unlawful language in real-time — it’s stellar."

Karan Gupta

CEO, Voicebox

Built on Trust

We prioritize your trust and privacy above all else. Our tools are designed with you in mind, and safeguarding your information remains central to our values.

Privacy

We mind our business. Not yours.

Explore the measures we implement to secure data and ensure the protection of your information.

Learn more

Security

Industry-standard security infrastructure.

Backed by SOC 2, CCPA, ISO/IEC 27018:2019, ISO/IEC 27017:2015, FERPA, and PCI DSS — learn how we strengthen our products.

Learn more

Responsible AI

A commitment to helpful and responsible AI.

Learn how we conscientiously develop Alphy’s technology to benefit everyone.

Learn more

Detect unlawful communication in real time and at scale.

Join the enterprises using HarmCheck to secure their communications.